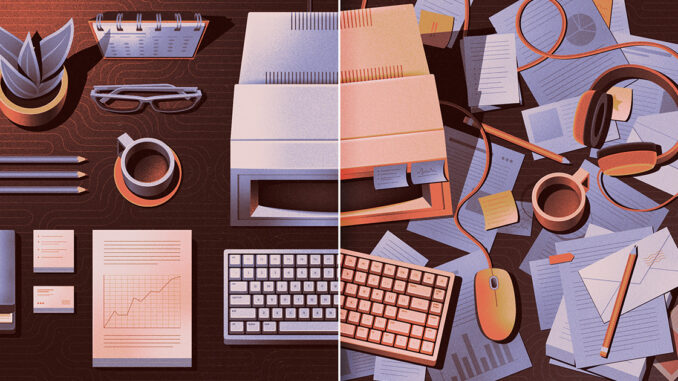

Just as there is no single best way to organize a library, there is no one-size-fits-all solution for storing information.

Consider a simple situation: You create a new digital file. Your computer needs to quickly find a place to put it, and if you later want to delete it, the machine has to quickly find the right bits to erase. Computer scientists aim to design storage systems, called data structures, that balance the time it takes to add data, the time it takes to remove it later, and the total amount of memory the system needs.

To visualize these challenges, imagine that you have all your books lined up on one long shelf. If they are arranged alphabetically, you can quickly select any book. But whenever you get a new book, it will take some time to find the right place for it. Conversely, placing books wherever there is space will save time now, but they will be hard to find later. This trade-off between insertion time and lookup time might not be a problem for a single-shelf library, but you can see how it could be cumbersome with thousands of books.
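As a rough illustration, here is a minimal Python sketch of both shelf strategies. The shelf is modeled as a list of titles, and the function names are hypothetical. The sorted shelf finds books by binary search (from the standard `bisect` module), but `bisect.insort` still has to shift every later title over to make room. The unsorted shelf appends instantly but may have to check every title to find one.

```python
import bisect

# Sketch of the two "one long shelf" strategies; titles are plain strings.

def sorted_insert(shelf, title):
    """Alphabetical shelf: binary-search for the slot, then shift later books over."""
    bisect.insort(shelf, title)

def sorted_find(shelf, title):
    """Alphabetical shelf: binary search takes about log2(n) steps."""
    i = bisect.bisect_left(shelf, title)
    return i < len(shelf) and shelf[i] == title

def unsorted_insert(shelf, title):
    """Wherever there's space: just put the book at the end."""
    shelf.append(title)

def unsorted_find(shelf, title):
    """No order to exploit, so we may have to look at every book."""
    for book in shelf:
        if book == title:
            return True
    return False

alphabetical, messy = [], []
for title in ["Dune", "Beloved", "Emma", "Annihilation"]:
    sorted_insert(alphabetical, title)
    unsorted_insert(messy, title)
```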

Instead of a shelf, you could set up 26 alphabetically labeled bins and assign books to bins based on the first letter of the author’s last name. Whenever you get a new book, you can instantly see which bin it goes into, and whenever you want a book, you’ll instantly know where to look for it. In some situations, storing and retrieving books this way can be much faster than keeping them on one long shelf.
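Here is a minimal sketch of the 26-bin system, assuming each book is recorded as an (author’s last name, title) pair and every last name starts with an unaccented letter from A to Z. The helper names are hypothetical.

```python
import string

# Sketch of the 26-bin system; bins are labeled "A" through "Z".

def make_bins():
    return {letter: [] for letter in string.ascii_uppercase}

def bin_for(author_last_name):
    # The rule that picks a bin: first letter of the last name, uppercased.
    return author_last_name[0].upper()

def add_book(bins, author_last_name, title):
    bins[bin_for(author_last_name)].append((author_last_name, title))

def find_book(bins, author_last_name, title):
    # Jump straight to one bin, then dig through only that bin.
    return (author_last_name, title) in bins[bin_for(author_last_name)]

library = make_bins()
add_book(library, "Morrison", "Beloved")
add_book(library, "Herbert", "Dune")
```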

Of course, this bin system has its own problems. Retrieving a book is instant only if it’s the only book in its bin; otherwise, you’ll have to dig around to find the right one. In the extreme scenario where all your books are by Asimov, Atwood, and Austen, you’re back to the problem of one long shelf, plus a bunch of empty bins taking up space in your living room.
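The worst case can be sketched the same way. This hypothetical snippet counts how many books you inspect before finding the one you want. With only A authors, every book lands in a single bin, so each lookup is a walk along what is effectively one long shelf.

```python
# Sketch of the worst case: every last name starts with "A".

def first_letter_bins(last_names):
    bins = {}
    for name in last_names:
        bins.setdefault(name[0].upper(), []).append(name)
    return bins

def comparisons_to_find(bins, name):
    """Count the books inspected in the target bin until `name` turns up."""
    count = 0
    for book in bins.get(name[0].upper(), []):
        count += 1
        if book == name:
            break
    return count

bins = first_letter_bins(["Asimov", "Atwood", "Austen"])
```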

Computer scientists often study data structures called hash tables, which are more sophisticated versions of this simple bin system. Hash tables calculate the storage address for each item from a known property of that item, called a key. In our example, the key for each book is the first letter of the author’s last name. But with this simple key, some bins are likely to be much fuller than others. (Few authors writing in English have surnames that begin with certain letters, so those bins would sit nearly empty.)

This kind of mathematical rule for transforming a key into a storage address is called a hash function. A cleverly designed hash function ensures that items will usually be spread fairly evenly across the bins, so you don’t have to spend as much time searching through any one bin.
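To see why the choice of hash function matters, this sketch compares the first-letter rule with one that uses the whole key. As a stand-in for a well-designed hash function it uses the CRC-32 checksum from Python’s `zlib` module. That is an illustrative assumption, not a function the text prescribes; it is used instead of Python’s built-in `hash()` because string hashes are randomized between runs. `NUM_BINS` and the function names are hypothetical.

```python
import zlib
from collections import Counter

NUM_BINS = 26  # hypothetical table size, matching the 26 bins above

def first_letter_hash(name):
    # The naive rule from the bin example: A -> 0, B -> 1, ..., Z -> 25.
    return ord(name[0].upper()) - ord("A")

def crc_hash(name):
    # Uses every character of the key: CRC-32 mixes the bytes,
    # and the remainder picks one of NUM_BINS bins.
    return zlib.crc32(name.encode("utf-8")) % NUM_BINS

def largest_bin(names, hash_fn):
    """Size of the fullest bin, i.e. the longest search within one bin."""
    return max(Counter(hash_fn(n) for n in names).values())

authors = ["Asimov", "Atwood", "Austen", "Adichie", "Achebe"]
naive = largest_bin(authors, first_letter_hash)  # all five share bin 0
better = largest_bin(authors, crc_hash)
```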

If you want to reduce lookup time further, you can use more bins. But that leads to another trade-off: These bins take up space even when they end up empty.
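The trade-off can be measured directly. This sketch, again assuming CRC-32 as the hash function and using a made-up set of 1,000 keys, reports the fullest bin (a proxy for lookup time) and the number of empty bins (wasted space) as the number of bins grows.

```python
import zlib

def bin_stats(keys, num_bins):
    """Distribute keys over num_bins bins; report fullest bin and empty bins."""
    sizes = [0] * num_bins
    for key in keys:
        sizes[zlib.crc32(key.encode("utf-8")) % num_bins] += 1
    return {
        "bins": num_bins,
        "largest_bin": max(sizes),
        "empty_bins": sizes.count(0),
    }

keys = [f"book-{i}" for i in range(1000)]  # hypothetical keys
for num_bins in (10, 100, 1000, 10000):
    print(bin_stats(keys, num_bins))
```

Whatever the hash function, two outcomes are guaranteed here: with 10 bins, the fullest one must hold at least 100 keys, and with 10,000 bins, at least 9,000 must sit empty, since there are only 1,000 keys to fill them.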

This trade-off between space and time is inherent in hash tables; it’s the price you pay to avoid the tension between insertion and lookup times that plagues simpler data structures. More than 70 years after hash tables were invented, computer scientists are still discovering new things about their fundamental properties. Recently, they finally came up with a version that strikes an ideal balance between space and time. And last year, a college student disproved a long-held assumption about the minimum time needed to find a particular entry in an almost full hash table.

A pile of priorities

Hash tables work well when you can’t predict which piece of data you’ll need to retrieve next. But this is not always the case. Imagine trying to complete the tasks on your to-do list while constantly being assigned new tasks with different deadlines. You want to be able to quickly add new items to your to-do list, but you don’t need to retrieve an item until it becomes your top priority.

In this case, the best choice is a type of data structure called a heap. As the name suggests, a heap is a somewhat haphazard approach to data storage. It’s basically the mathematical version of stacking things: Some items are stored above others, and those higher items are easier to access. The item with the highest priority is always at the top of the pile, where you can grab it immediately. Lower layers will be more cluttered, but you don’t have to worry about the relative position of these low-priority items.
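Python’s standard library ships a heap in the `heapq` module, which keeps a min-heap inside an ordinary list so that the smallest item always sits at index 0. Here is a sketch of the to-do list using it; the tasks and due dates are invented for illustration.

```python
import heapq

# Each entry is (due_date, task); a smaller due date means more urgent.
todo = []
heapq.heappush(todo, (5, "file taxes"))
heapq.heappush(todo, (2, "pay rent"))
heapq.heappush(todo, (9, "clean garage"))
heapq.heappush(todo, (1, "submit report"))

most_urgent = todo[0]         # the top of the pile, readable immediately
done = heapq.heappop(todo)    # remove it; heapq reorganizes the rest
next_up = todo[0]
```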

The simplest implementation of this basic idea uses a mathematical object called a binary tree, which is a network of nodes with a special shape: There is one node at the top, and each node is connected to at most two nodes directly below it.
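In code, such a tree is often stored as a flat list read layer by layer; that layout is an implementation choice assumed here, not something the text specifies. With it, the node at position `i` has the nodes below it at positions `2*i + 1` and `2*i + 2`, and the node above it at `(i - 1) // 2`. The helper names are hypothetical.

```python
# Sketch of a binary tree stored as a flat list: top node first,
# then each following layer from left to right.

def parent(i):
    return (i - 1) // 2

def children(i, size):
    """Indices of the (up to two) nodes directly below node i."""
    return [c for c in (2 * i + 1, 2 * i + 2) if c < size]

def layers(tree):
    """Split the flat list back into layers of size 1, 2, 4, 8, ..."""
    result, start, width = [], 0, 1
    while start < len(tree):
        result.append(tree[start:start + width])
        start += width
        width *= 2
    return result
```

For example, `layers([1, 3, 2, 7, 4, 6])` splits six items into a top node, a full second layer, and a partly filled third layer.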

Let’s imagine a binary tree that contains items in a to-do list. Each node can store one item, and each item is labeled with a number that represents its due date. Higher-priority items, the ones due sooner, have smaller numbers.

Mark Belan/Quanta Magazine

Each new item is placed in an empty position in the current lowest layer.

When a new item arrives, compare its due date with that of the item in the node directly above it. If the new task is due earlier, swap the two items. Keep swapping until the new item sits directly below a more urgent item or reaches the top.
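Here is a minimal Python sketch of that insertion procedure, often called "sifting up." It assumes the tree is stored as a flat list in which the node above position `i` is at `(i - 1) // 2`. The function name is hypothetical, and it returns the number of swaps so the cost is easy to check.

```python
# Numbers are due dates; smaller means more urgent.

def insert(heap, due_date):
    """Add a task and swap it upward; return how many swaps were needed."""
    heap.append(due_date)          # first empty spot in the lowest layer
    i = len(heap) - 1
    swaps = 0
    while i > 0:
        p = (i - 1) // 2           # the node directly above
        if heap[i] >= heap[p]:     # already below an item at least as urgent
            break
        heap[i], heap[p] = heap[p], heap[i]
        i = p
        swaps += 1
    return swaps

tasks = []
for due in [5, 3, 8, 1]:
    insert(tasks, due)
```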

This procedure ensures that the item with the highest priority always rises to the top. The procedure is also extremely fast. Even in the nightmare scenario where you have 1,000 tasks on your to-do list and you’re constantly getting new ones, the heap’s shape ensures that it takes no more than nine swaps to move each new item to its appropriate position: 1,000 items fill only 10 layers, so a new item can climb at most nine levels. Whenever you complete the most urgent task and remove it from the pile, the next most urgent task is always one of the two items in the layer just below, so you can quickly promote it to the top.
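The sketch below adds removal and checks the nine-swap figure. It repeats the insertion routine so the block stands alone. For removal it uses the standard textbook approach, which is an assumption here rather than something the text spells out: Move the last item in the lowest layer to the top, then swap it downward past its more urgent child until the order is restored. Inserting 1,000 due dates from latest to earliest forces every new item to climb as far as it can. Function names are hypothetical.

```python
def insert(heap, due_date):
    """Sift a new item up; return the number of swaps."""
    heap.append(due_date)
    i, swaps = len(heap) - 1, 0
    while i > 0 and heap[i] < heap[(i - 1) // 2]:
        p = (i - 1) // 2
        heap[i], heap[p] = heap[p], heap[i]
        i, swaps = p, swaps + 1
    return swaps

def pop_most_urgent(heap):
    """Remove and return the top item, then restore the heap order."""
    top = heap[0]
    last = heap.pop()                # take the last item in the lowest layer
    if heap:
        heap[0] = last               # move it to the top...
        i = 0
        while True:                  # ...and swap it down past smaller children
            kids = [c for c in (2 * i + 1, 2 * i + 2) if c < len(heap)]
            if not kids:
                break
            smallest = min(kids, key=lambda c: heap[c])
            if heap[i] <= heap[smallest]:
                break
            heap[i], heap[smallest] = heap[smallest], heap[i]
            i = smallest
    return top

# Worst case: each new task is more urgent than everything already stored.
heap = []
worst = max(insert(heap, due) for due in range(1000, 0, -1))
```

Here `worst` comes out to 9, matching the text: the deepest of the 1,000 positions lies in the tenth layer, nine levels below the top.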

In computer science, heaps are widely used in algorithms for finding the shortest path from a given starting point in a network to every other point. In 2024, a team of researchers used an ingenious new heap design to transform the classical shortest path algorithm into one that is theoretically optimal for any network layout.
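For reference, here is a sketch of the classical algorithm in question, Dijkstra’s algorithm, using Python’s `heapq` as its heap. It is the standard textbook version, not the 2024 design. It assumes the network is a dict mapping each node to a list of (neighbor, length) pairs with nonnegative lengths; the road network itself is made up.

```python
import heapq

def shortest_distances(graph, start):
    """Dijkstra's algorithm: shortest distance from start to each reachable node."""
    dist = {start: 0}
    heap = [(0, start)]                    # (distance so far, node)
    while heap:
        d, node = heapq.heappop(heap)      # closest node not yet finalized
        if d > dist[node]:
            continue                       # stale entry; a shorter path was found
        for neighbor, length in graph.get(node, []):
            new_d = d + length
            if new_d < dist.get(neighbor, float("inf")):
                dist[neighbor] = new_d
                heapq.heappush(heap, (new_d, neighbor))
    return dist

roads = {
    "A": [("B", 4), ("C", 1)],
    "C": [("B", 2), ("D", 5)],
    "B": [("D", 1)],
}
```

Starting from A, this gives distances of 0, 3, 1 and 4 to A, B, C and D. The direct road from A to B (length 4) loses to the detour through C (length 3).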

There is no shortage of self-help books full of conflicting advice on how to best organize your belongings. If computer science offers any lessons, it’s that there is no perfect solution—every approach comes with trade-offs. But if some items are more important to you than others, don’t be afraid to leave them a little messy.
